A Data Engineer's Guide to Scraping Websites With Java

If you've built a web scraper in Java, you've probably hit a wall. Most guides teach you basic HTML parsing, which is useless against modern anti-bot systems. Your scraper works for five minutes, then the IP bans, CAPTCHAs, and cryptic connection errors start rolling in. This is where most projects die—wasted engineering hours and burned proxy budgets, all because the guides were incomplete.

Gunnar

Last updated -

Feb 6, 2026

Tutorials

They never tell you about the real challenges: browser fingerprinting, TLS signatures, header consistency, and ASN reputation. Without mastering these, your scraper is dead on arrival.

This guide is different. We operate a massive proxy network, so we see firsthand what gets engineers blocked and what actually breaks through at scale. We're going to cover the operational reality of data extraction—the parts other tutorials conveniently leave out.

What is Java Web Scraping?

Java web scraping is the process of using Java libraries to automate the extraction of data from websites. It's not just about parsing HTML; it's about building resilient, high-throughput data pipelines that can navigate anti-bot defenses and operate reliably at scale. Success hinges on managing your digital footprint through proxies, headers, and browser emulation.

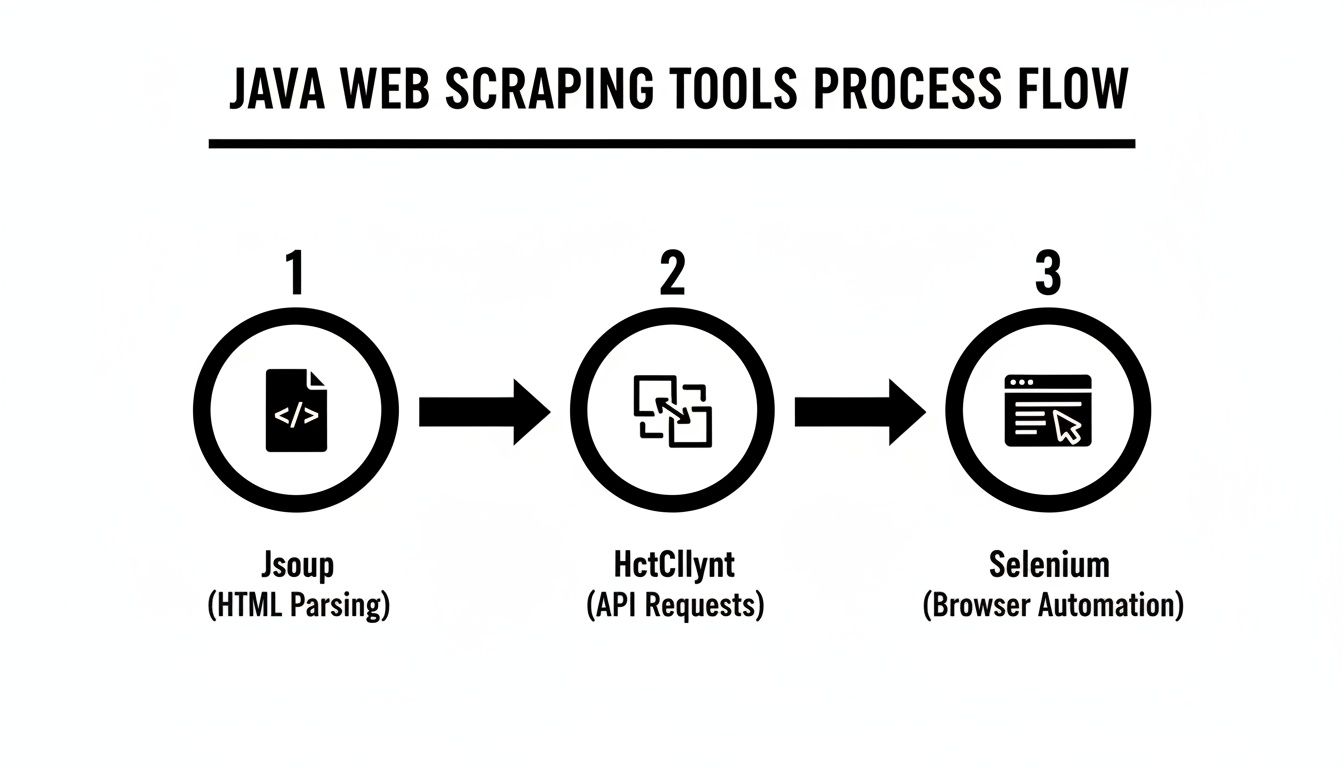

Choosing Your Java Scraping Toolkit: The Real Tradeoffs

Picking the right Java library is a critical decision with direct cost and performance implications. Your choice dictates speed, scalability, and your ability to handle JavaScript-heavy sites. Let's break down the main options from an operator's perspective. A solid grasp of the core Java ecosystem is your best asset here, as it helps you match the tool to the target's complexity.

Jsoup: For Raw Speed on Static HTML

Jsoup is an HTML parser. It's incredibly fast and has a low memory footprint because it doesn't execute JavaScript or render pages. It just parses text.

When It Works: Perfect for high-volume scraping of server-rendered websites like basic product listings or news archives. If you can see the data in "View Page Source," Jsoup will be the most efficient tool.

When It Fails: The moment a site uses JavaScript to load content dynamically, Jsoup becomes useless. It only sees the initial HTML skeleton, missing anything loaded asynchronously.

Operator's Take: Use Jsoup for mass-harvesting data from simple sites. Its tiny footprint means you can run thousands of concurrent threads on a single machine. But the second you hit a single-page application (SPA), it's the wrong tool.

Apache HttpClient: For Surgical API Scraping

HttpClient is not a parser; it's a library for making precise HTTP requests. It's your weapon of choice when the target data is fetched from a backend API, not rendered in the initial HTML. Your job is to find the API calls in your browser's developer tools and replicate them.

When It Works: Ideal for scraping mobile app APIs or web apps that use predictable

XHR/Fetchrequests to load JSON data. This method is faster and more reliable than browser automation because you bypass rendering entirely.When It Fails: It's useless if the API calls are protected by complex authentication tokens generated by client-side JavaScript. Reverse-engineering that logic is often a dead end.

Selenium WebDriver: For Full Browser Emulation

Selenium automates a real browser (like Chrome), executing JavaScript and rendering pages just like a human user. It's the heavyweight tool you use when nothing else works, but its power comes at a significant cost in performance and complexity.

When It Works: Essential for scraping complex SPAs, navigating interactive forms, or handling sites that require user actions like clicks and scrolls to reveal data. When paired with the right proxies, it can be highly effective. Learn more in our guide on integrating Selenium with proxies.

When It Fails: It fails at scale. Running hundreds of headless browser instances is resource-intensive and expensive. Selenium is also slower and more prone to detection by advanced anti-bot systems that analyze browser fingerprints.

To make the decision clearer, here's how they stack up.

Java Scraping Library Decision Matrix

Library | Best For | When It Fails | Performance Overhead | Proxy Integration Complexity |

|---|---|---|---|---|

Jsoup | High-volume static HTML scraping | JavaScript-heavy sites, SPAs | Very Low | Low |

HttpClient | API scraping, reverse-engineering | Complex client-side auth tokens | Low | Low-to-Medium |

Selenium | Complex SPAs, interactive sites | At scale, advanced anti-bot | Very High | Medium |

Start with the simplest tool that gets the job done (Jsoup) and only escalate when necessary. This approach saves significant development time and infrastructure costs. For building sustainable operations, you must incorporate general software engineering best practices. This shifts your focus from a one-off script to a resilient data pipeline.

Proxy Rotation: How It Actually Works (And Fails)

Your proxy rotation strategy is the foundation of a successful scraping operation. Getting it wrong is the fastest way to burn your IP pool and get permanently flagged. It's not just about changing IPs; it's about matching your rotation logic to the target's session requirements.

Per-Request Rotation: This is the standard for stateless scraping, like collecting search engine results or product prices. Every request goes through a new IP. This distributes your footprint across a massive address pool, making it extremely difficult for anti-bot systems to detect a pattern.

Sticky Sessions: This is non-negotiable for any multi-step workflow. If you are logging in, adding an item to a cart, or navigating a checkout process, you must use the same IP for the entire session. Changing IPs mid-session is a rookie mistake that screams "bot!" and triggers instant blocks or CAPTCHAs.

Operator's Insight: A common failure is holding a sticky session for too long. A residential IP that's active for hours on end looks unnatural. Keep sticky sessions brief—a few minutes at most—to mimic real user behavior. After the session, rotate to a new sticky IP. For a deeper dive, read our guide on how to use residential proxies effectively.

Randomizing Headers to Reduce Fingerprinting

Your IP is only one part of your identity. HTTP headers are another critical component that anti-bot systems analyze. Sending the same User-Agent with every request is an amateur move that will get you flagged. A resilient scraper rotates headers in sync with its proxies.

Build a utility in Java to generate realistic header profiles. Don't just randomize the User-Agent; vary Accept-Language, Accept-Encoding, and the increasingly important Sec-CH-UA (Client Hints).

Key Headers to Synchronize:

User-Agent: Use a list of real, current user agents from popular browsers.

Accept-Language: Must match the geolocation of your proxy. A US-based IP should send

en-US.Sec-CH-UA: These "Client Hints" must be consistent with the

User-Agentyou're sending.

The combination of smart proxy rotation and dynamic header management is what separates a fragile script from a professional data collection engine.

Why You’re Still Getting Blocked (The Real Reasons)

If rotating IPs and headers was enough, this job would be easy. The reality is that sophisticated anti-bot systems look far beyond your IP address. Engineering teams constantly hit a wall where they've implemented proxies correctly but still face a 90% failure rate. This happens because their scraper is leaking signals that expose it as automated.

Here’s what’s actually getting you blocked:

Browser Fingerprinting: Headless browsers used by tools like Selenium are notoriously easy to detect. Anti-bot scripts check for JavaScript properties (

navigator.webdriver), analyze canvas rendering inconsistencies, and inspect the list of fonts and WebGL capabilities. If your scraper's fingerprint doesn't perfectly match that of a real user's browser, you're blocked.TLS/JA3 Fingerprinting: The very first connection—the TLS handshake—can give you away. The unique combination of SSL/TLS versions, cipher suites, and extensions creates a signature called a JA3 fingerprint. Standard Java HTTP clients and automation tools have distinct, non-human fingerprints that systems like Cloudflare block on sight.

ASN Reputation: Not all IPs are equal. An IP's Autonomous System Number (ASN) reveals the network it belongs to. An IP from a data center ASN (like AWS or Google Cloud) is treated with extreme suspicion on sites that expect residential traffic. Using datacenter proxies for scraping e-commerce or social media sites is a guaranteed failure.

Bad Rotation Logic: Even with premium residential proxies, flawed implementation will sabotage you. A common mistake is a geo-mismatch: using a German proxy IP while sending an

Accept-Languageheader foren-USwith a New York timezone. Another is poor subnet rotation; cycling through IPs from the same/24subnet is an obvious red flag. Smart rotation requires pulling from a diverse pool of subnets and geolocations. If you find your proxies got banned, your rotation logic is almost always the cause.

Operator’s Takeaway: Your goal is to present a consistent, human-like profile across every layer—from the network (ASN) to the application (headers, browser fingerprint). A single inconsistency is all an anti-bot system needs to shut you down.

Real-World Use Cases for Java Scraping

Let's move beyond theory. Here's how Java scraping plays out in real-world scenarios, including the common failure points.

E-commerce Price Monitoring

Why proxies are required: E-commerce sites aggressively block scrapers to prevent competitive price monitoring and inventory tracking. They employ sophisticated anti-bot services.

What proxy type works: High-quality residential proxies are mandatory. You need IPs that belong to real ISPs to appear as a legitimate shopper.

What fails at scale: Datacenter proxies are blocked almost instantly. Per-request rotation will get you flagged on product pages that require session consistency. You need sticky sessions that last just long enough to gather the data.

What teams underestimate: The sheer complexity of product page variations and the JavaScript required to render prices. Many teams start with Jsoup and fail, then switch to Selenium and struggle with the resource overhead.

Ad Verification and SEO Auditing

Why proxies are required: To check ad placements and SERP rankings from different geographic locations and devices without personalization or IP-based bias.

What proxy type works: A mix of residential and mobile proxies provides the best coverage. You need to simulate real users in specific cities and on specific mobile carriers.

What fails at scale: Using proxies from the wrong geolocation. Checking a London-based SERP with a proxy in Dallas will give you polluted, useless data.

What teams underestimate: The difficulty of maintaining clean, unflagged IPs. Search engines are experts at detecting automated traffic, so a large, diverse proxy pool is essential for accurate data. Learn more about unlocking data at scale with rotating proxies.

Scaling Your Scraper: Concurrency, Rate Limiting, and Deployment

A single-threaded scraper is a toy. To collect data seriously, you need to operate in parallel. But scaling isn't about raw speed; it's about controlled, sustainable throughput.

Managing Concurrency with ExecutorService

In Java, use ExecutorService to manage a pool of worker threads. This gives you precise control over concurrency, preventing your scraper from overwhelming your machine or the target server. A FixedThreadPool fed with scraping tasks is the standard, robust approach.

Implementing Smart Rate Limiting

Concurrency without rate limiting will get your entire IP pool banned. Hammering a server is a rookie mistake. There is no magic number for a "safe" rate; start conservatively (e.g., one request every few seconds per IP) and monitor for errors like 429 Too Many Requests.

Operator's Take:

Thread.sleep()is useless for distributed scraping. You need a centralized rate limiter. Use a tool like Redis with atomic operations (INCR) to create a sliding window counter that coordinates request rates across your entire scraper fleet.

Deployment and Monitoring

For production, containerize your Java application using Docker. This ensures consistency and simplifies deployment to cloud platforms like Azure or orchestration systems like Kubernetes.

Your scraper will fail. It's not a matter of if but when. Implement robust monitoring and alerting for:

Spikes in HTTP errors (4xx/5xx): A clear sign of blocks.

Drops in successful data extraction: Indicates a website layout change.

High proxy failure rates: Signals an issue with your provider or rotation.

Data validation failures: Your scraper might run "successfully" but return garbage data. This is the most dangerous failure mode and requires active data quality checks.

The need for this level of robust data extraction is exploding. The global web scraper software market trends show significant growth, with Java at the forefront of enterprise-level operations.

How to Choose the Right Setup

Budget vs. Reliability: Free or cheap datacenter proxies are a false economy. They will fail on any serious target. The upfront cost of premium residential or ISP proxies pays for itself in higher success rates and reduced engineering time spent fighting blocks.

When NOT to use rotating proxies: If you are accessing a private API with a key or scraping a site where you are logged in with a persistent account, rotating proxies can trigger security alerts. In these cases, a static residential or ISP proxy is a better choice.

Common Buying Mistake: Buying a small, static list of residential proxies. These get flagged and banned just like datacenter IPs. True residential proxy networks offer massive, rotating pools of millions of IPs, which is what you need for sustainable scraping.

Frequently Asked Questions

Is web scraping with Java legal?

It's nuanced. Scraping publicly available data is generally considered legal, but avoid collecting any personally identifiable information (PII) to stay clear of regulations like GDPR. Always respect a site's robots.txt file and Terms of Service. Ignoring them marks you as a hostile actor, even if it's not strictly illegal.

Which is better for scraping: Java or Python?

It depends on the goal. Python, with libraries like BeautifulSoup and Scrapy, is excellent for rapid prototyping and smaller projects. For enterprise-grade, high-performance applications that require robust multithreading and long-term stability, Java's performance and strong typing make it the superior choice for scraping websites with java at scale.

How many requests per second is too many?

There's no single answer. A small blog might struggle with one request per second, while a major e-commerce site won't notice thousands. Start slow (one request every few seconds per IP), monitor for 429 (Too Many Requests) or 503 (Service Unavailable) errors, and only increase the rate gradually once you establish a stable baseline.

Why can't I just use free proxies?

Using free proxies for any serious project is a critical error. They are slow, unreliable, and almost certainly already blacklisted by any target with basic anti-bot defenses. Worse, they pose a massive security risk, as many are honeypots designed to intercept or modify your traffic. For reliability and success, premium residential or ISP proxies are non-negotiable.

Ready to build a Java scraper that can handle any target, no matter how tough? HypeProxies provides the high-performance residential and ISP proxy networks engineered for exactly that. Get the speed, reliability, and clean IPs you need to stop getting blocked and start collecting data successfully. Explore our proxy solutions at HypeProxies

Share on

$1 one-time verification. Unlock your trial today.

Stay in the loop

Subscribe to our newsletter for the latest updates, product news, and more.

No spam. Unsubscribe at anytime.