BeautifulSoup Web Scraping: Why Most Guides Fail at Scale

Most BeautifulSoup web scraping guides show you how to parse a single, pristine HTML page and then call it a day. That approach is a ticket to failure. The second your scraper hits the real, defensive web, you're left with brittle code, a mountain of IP blocks, and incomplete data that renders your project useless.

Gunnar

Last updated: Feb 5, 2026

As proxy infrastructure operators, we see where these simple guides lead developers astray. They teach parsing but completely ignore the operational nightmare of collecting data at scale. They build your confidence with easy wins but leave you unprepared for the walls you're about to hit: aggressive anti-bot systems, JavaScript-rendered content, and the infrastructure demands of scraping millions of pages.

This guide is different. We're skipping the basic "how to parse a <div>" examples to focus on building resilient scraping logic that anticipates failure and is actually built to work in the real world.

What is BeautifulSoup and Why Does It Matter?

BeautifulSoup is a Python library for parsing HTML and XML documents. It creates a parse tree from page source code that can be used to extract data in a hierarchical and more readable manner.

In practice, its job is to make sense of messy, real-world HTML so you can pinpoint and extract the exact data you need, like product prices, article text, or contact information. It's an excellent parser, but it's just one piece of the scraping puzzle—it can't fetch the web page or handle network-level challenges.

How BeautifulSoup Scraping Actually Works (And Where It Breaks)

At its core, a BeautifulSoup scraping operation involves two steps:

Fetch: An HTTP client (like Python's requests library) sends a GET request to a URL. The server responds with the raw HTML content.

Parse: BeautifulSoup takes that raw HTML and transforms it into a navigable Python object, allowing you to search and extract data using tags, classes, or IDs.
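As a minimal sketch of the parse step, the snippet below feeds a hard-coded HTML string to BeautifulSoup in place of a real HTTP response body. The markup, the `page-title` ID, and the `item` class are made up for illustration.

```python
from bs4 import BeautifulSoup

# Stand-in for the body of an HTTP response (the result of the fetch step).
raw_html = """
<html><body>
  <h1 id="page-title">Catalog</h1>
  <ul>
    <li class="item">Alpha</li>
    <li class="item">Beta</li>
  </ul>
</body></html>
"""

# Parse: turn the raw string into a navigable tree.
soup = BeautifulSoup(raw_html, "html.parser")

# Search by ID. Calling get_text() directly is safe here only because
# the markup is fixed; real pages need an existence check first.
title = soup.find(id="page-title").get_text(strip=True)

# Search by tag and class.
items = [li.get_text(strip=True) for li in soup.find_all("li", class_="item")]

print(title, items)
```

The built-in `html.parser` backend is used so the sketch needs nothing beyond `beautifulsoup4` itself.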

This sounds simple, but the "fetch" step is where most projects fail. The quality of the HTML you get depends entirely on the quality of your request. A naive request from a datacenter IP with a default User-Agent is an immediate red flag for any modern anti-bot system.

This is where proxy infrastructure becomes critical. Integrating a proxy pool allows your scraper to route requests through different IP addresses, making it appear as organic user traffic. However, how you manage that rotation is crucial.

Per-Request Rotation: Assigning a new IP for every single request works for scraping static, independent pages like product listings. Applied to requests that belong to one browsing session, however, it looks unnatural and is easily detected.

Session-Based Rotation (Sticky Sessions): For any multi-step process (like a checkout flow or navigating behind a login), you must maintain the same IP across multiple requests. Abruptly changing IPs mid-session will invalidate your session and get you blocked. This is a common failure point teams underestimate.
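The two rotation modes can be sketched with a small helper. `ProxyRotator`, its method names, and the proxy URLs in the usage lines are hypothetical; a commercial proxy service may expose sticky sessions through its own mechanism instead.

```python
import itertools
from typing import Dict, Iterable


class ProxyRotator:
    """Hands out proxies either per request or pinned to a session key."""

    def __init__(self, proxies: Iterable[str]) -> None:
        self._pool = list(proxies)
        if not self._pool:
            raise ValueError("proxy pool is empty")
        self._cycle = itertools.cycle(self._pool)
        self._sticky: Dict[str, str] = {}

    def next_proxy(self) -> str:
        """Per-request rotation: return the next proxy in the pool."""
        return next(self._cycle)

    def sticky_proxy(self, session_id: str) -> str:
        """Session-based rotation: always return the same proxy for a session."""
        if session_id not in self._sticky:
            self._sticky[session_id] = next(self._cycle)
        return self._sticky[session_id]

    def release(self, session_id: str) -> None:
        """Forget a finished session so its key can be reassigned later."""
        self._sticky.pop(session_id, None)


# Hypothetical proxy endpoints, for illustration only.
rotator = ProxyRotator([
    "http://proxy-a.example:8000",
    "http://proxy-b.example:8000",
    "http://proxy-c.example:8000",
])
listing_proxy = rotator.next_proxy()             # independent product page
login_proxy = rotator.sticky_proxy("account-1")  # first step of a login flow
same_proxy = rotator.sticky_proxy("account-1")   # later step, same IP
```

Keying the sticky map on a session identifier keeps the choice of mode in the calling code: independent pages call `next_proxy()`, while every step of a multi-step flow asks for the same key.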

Furthermore, concurrency and rate limits are operational realities. Sending too many requests from a single IP in a short period will trigger rate-limiting blocks, regardless of how good your parser is. Effective scraping requires distributing requests across a large, clean IP pool. Our operator's guide to rotating proxies for web scraping covers these infrastructure challenges in detail.
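One way to keep any single IP under a target's rate limit is to enforce a minimum gap between uses of each proxy. The sketch below is an assumption-laden illustration: `PerProxyThrottle` is a hypothetical helper, and the right interval depends entirely on the target site.

```python
import time
from typing import Callable, Dict


class PerProxyThrottle:
    """Enforces a minimum interval between requests sent through one proxy."""

    def __init__(
        self,
        min_interval: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.min_interval = min_interval
        self._clock = clock  # injectable so the logic can be tested without sleeping
        self._last_used: Dict[str, float] = {}

    def wait_time(self, proxy: str) -> float:
        """Seconds until `proxy` may be used again (0.0 if it is ready)."""
        last = self._last_used.get(proxy)
        if last is None:
            return 0.0
        return max(0.0, self.min_interval - (self._clock() - last))

    def acquire(self, proxy: str) -> None:
        """Block until `proxy` is ready, then record that it was used."""
        delay = self.wait_time(proxy)
        if delay > 0:
            time.sleep(delay)
        self._last_used[proxy] = self._clock()
```

Calling `acquire(proxy)` immediately before each request spreads load across the pool: a proxy that was just used has to wait, while an idle one is available at once.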

BeautifulSoup with Python: Code Examples That Don't Break

A production-ready scraper is built on a foundation of clean code and defensive logic. This starts with a proper environment and extends to how you handle requests and parse data. Running pip install beautifulsoup4 in your global environment is a recipe for dependency hell—always use a virtual environment. It's a non-negotiable step based on fundamental software engineering best practices.

Environment Setup

Create and activate an isolated Python environment to manage your dependencies cleanly.
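From a shell, the usual route is `python -m venv .venv` followed by activating the environment. To stay in Python, the sketch below uses the standard-library `venv` module to create an environment and to check whether the current interpreter is already running inside one. The function names and the `.venv` directory name are illustrative choices.

```python
import sys
import venv
from pathlib import Path


def in_virtualenv() -> bool:
    """Return True when the interpreter is running inside a virtual environment."""
    # Inside a venv, sys.prefix points at the environment while
    # sys.base_prefix still points at the base installation.
    return sys.prefix != sys.base_prefix


def create_env(env_dir: str, with_pip: bool = True) -> Path:
    """Create an isolated environment, similar to `python -m venv env_dir`."""
    venv.create(env_dir, with_pip=with_pip)
    return Path(env_dir)


if __name__ == "__main__":
    if not in_virtualenv():
        print("Not in a virtual environment: create one with create_env('.venv') "
              "and activate it before installing beautifulsoup4.")
```

Activation itself still happens in the shell, since it changes the environment of the calling process. A guard like `in_virtualenv()` at the top of a scraper script is a cheap way to catch accidental global installs.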

Writing a Resilient HTTP Request Function

Never send a request without a realistic User-Agent and a timeout. A default requests call screams "bot." This function includes basic error handling and proxy integration—the minimum for any serious scraper.
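A minimal sketch of such a function, using the `requests` library, might look like the following. The name `fetch_html`, the header values, and the 10-second default timeout are illustrative assumptions rather than recommended settings.

```python
from typing import Optional

import requests

# Illustrative browser-like headers; keep them in sync with a real,
# current browser release when using this for real traffic.
DEFAULT_HEADERS = {
    "User-Agent": (
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
        "(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36"
    ),
    "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
    "Accept-Language": "en-US,en;q=0.9",
}


def fetch_html(
    url: str,
    proxy: Optional[str] = None,
    timeout: float = 10.0,
) -> Optional[str]:
    """Return the page HTML, or None if the request fails for any reason."""
    # requests expects a mapping from URL scheme to proxy URL.
    proxies = {"http": proxy, "https": proxy} if proxy else None
    try:
        response = requests.get(
            url,
            headers=DEFAULT_HEADERS,
            proxies=proxies,
            timeout=timeout,
        )
        # Treat 4xx and 5xx responses as failures instead of parsing error pages.
        response.raise_for_status()
    except requests.exceptions.RequestException as exc:
        print(f"Request to {url} failed: {exc}")
        return None
    return response.text
```

Returning `None` on failure keeps the caller's control flow simple: it can retry with a different proxy or skip the URL without wrapping every call in its own `try` block.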

This request layer is where you integrate your proxy logic. For a deep dive, see our guide on how to use residential proxies.

Parsing Defensively

The most common failure in parsing is assuming an element exists. If BeautifulSoup returns None, calling a method like .text on it will crash your script. Always check for existence before extracting data.
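One way to do this is to wrap the lookup in a small helper. The sketch below assumes a hypothetical `extract_text` function and made-up markup with a missing price element.

```python
from typing import Optional

from bs4 import BeautifulSoup


def extract_text(
    soup: BeautifulSoup,
    selector: str,
    default: Optional[str] = None,
) -> Optional[str]:
    """Return the stripped text of the first match, or `default` if none exists."""
    element = soup.select_one(selector)
    if element is None:
        return default
    return element.get_text(strip=True)


# Made-up markup: the title is present, the price is not.
html = """
<div class="product">
  <h2 class="title"> Example Widget </h2>
</div>
"""
soup = BeautifulSoup(html, "html.parser")

record = {
    "title": extract_text(soup, "h2.title"),
    "price": extract_text(soup, "span.price", default="N/A"),
}
print(record)  # {'title': 'Example Widget', 'price': 'N/A'}
```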

This defensive approach—checking for None and returning a default value—prevents crashes and makes your data cleaner by explicitly marking where extraction failed.

Why You're Still Getting Blocked (It's Not Just Your IP)

Teams invest in top-tier residential proxies and still get blocked. Why? Because sophisticated anti-bot systems look far beyond your IP address. They analyze the entire digital signature of your request, and if it looks robotic, you're flagged. Rotating IPs with a default requests setup is like putting a fake license plate on a one-of-a-kind car—it’s not fooling anyone.

Here’s what’s really getting you caught:

Browser Fingerprinting: Modern systems analyze your TLS/JA3 fingerprint, HTTP/2 settings, and client hints. If your "Chrome on Windows" User-Agent is paired with a TLS handshake that screams "Python script on Linux," you are immediately identified as a bot.

Header Entropy: Each real browser sends its headers in a characteristic order with version-specific values. A header block that doesn't match the browser named in your User-Agent, repeated identically across thousands of requests, is a dead giveaway.

ASN Reputation: Not all IPs are equal. An IP's Autonomous System Number (ASN) reveals its origin (e.g., a known datacenter vs. a residential ISP). Requests from datacenter ASNs are treated with high suspicion for scraping residential-gated content.

Automation Tool Detection: Browser automation tools like Selenium and Playwright leave traces (like the navigator.webdriver flag in JavaScript). Stealth versions of these tools are required to evade detection.

Bad Rotation Logic: Using a new IP for every asset on a page (CSS, images, JS) is unnatural user behavior and an easy way to get your entire proxy subnet banned. Use per-request rotation for independent pages and sticky sessions for multi-step actions. Our support team often sees users get blocked because of this; read about why their proxies got banned.

Successful scraping at scale is about mimicry. Your entire request stack—from the IP's ASN to the TLS handshake—must tell a consistent, human-like story.
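Part of that consistency can be checked before a request is ever sent. The sketch below is a hypothetical pre-flight check that compares the platform implied by the User-Agent with the `Sec-CH-UA-Platform` client hint. It covers only the header layer; TLS and HTTP/2 fingerprints are set by the HTTP client and cannot be inspected this way.

```python
from typing import Dict, List, Optional

# Ordered so that more specific markers win: Android user agents also
# contain "Linux", and iPhone user agents contain "like Mac OS X".
_UA_PLATFORM_MARKERS = (
    ("Android", "Android"),
    ("iPhone", "iOS"),
    ("Windows NT", "Windows"),
    ("Mac OS X", "macOS"),
    ("Linux", "Linux"),
)


def platform_from_user_agent(user_agent: str) -> Optional[str]:
    """Guess the platform a User-Agent string claims to run on."""
    for marker, platform in _UA_PLATFORM_MARKERS:
        if marker in user_agent:
            return platform
    return None


def find_header_mismatches(headers: Dict[str, str]) -> List[str]:
    """Return human-readable problems found in a request header set."""
    problems: List[str] = []
    user_agent = headers.get("User-Agent", "")
    if not user_agent or user_agent.startswith("python-requests"):
        problems.append("User-Agent is missing or is the requests default")

    claimed = platform_from_user_agent(user_agent)
    # The client hint value is sent as a quoted string, e.g. "Windows".
    hinted = headers.get("Sec-CH-UA-Platform", "").strip('"')
    if claimed and hinted and claimed != hinted:
        problems.append(
            f"User-Agent claims {claimed} but Sec-CH-UA-Platform says {hinted}"
        )
    return problems
```

An empty list means the headers agree with each other, not that the request is undetectable.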

Real-World Use Cases (And What Actually Works)

Generic lists of use cases are useless. Here’s a breakdown of what works and what fails for common scraping targets.

| Use Case | Why Proxies Are Required | What Proxy Type Works | What Fails at Scale | What Teams Underestimate |
|---|---|---|---|---|
| E-commerce Price Monitoring | To avoid rate-limiting and access geo-specific pricing. | High-performance Residential Proxies (per-request rotation). | Datacenter proxies (easily blocked), slow residential IPs. | The need to solve CAPTCHAs and parse inconsistent HTML structures across thousands of products. |
| SEO & SERP Data Collection | To bypass search engine blockades (like Google's) that trigger after a few queries from one IP. | ISP Proxies or high-quality residential proxies with geo-targeting. | Shared/datacenter IPs (instantly flagged by Google), not handling CAPTCHAs. | The complexity and cost of solving CAPTCHAs at volume. Google's anti-bot is world-class. |
| Social Media Data Aggregation | To scrape profiles and posts without triggering account bans or login walls. | Residential Proxies with sticky sessions to maintain a logged-in state. | Per-request rotation (invalidates sessions), datacenter IPs (blocked by platforms). | The challenge of mimicking API calls and handling dynamic, JavaScript-heavy interfaces. |
| Ad Verification | To check ad placements from different geographic locations and device types. | Mobile & Residential Proxies with precise geo-targeting. | Datacenter proxies (don't reflect real user traffic), proxies without mobile IP options. | Fingerprint consistency. The ad network checks if your device profile matches your IP location and type. |

Choosing the Right Setup: Decision Rules

Your toolchain depends entirely on your target's complexity and your project's scale.

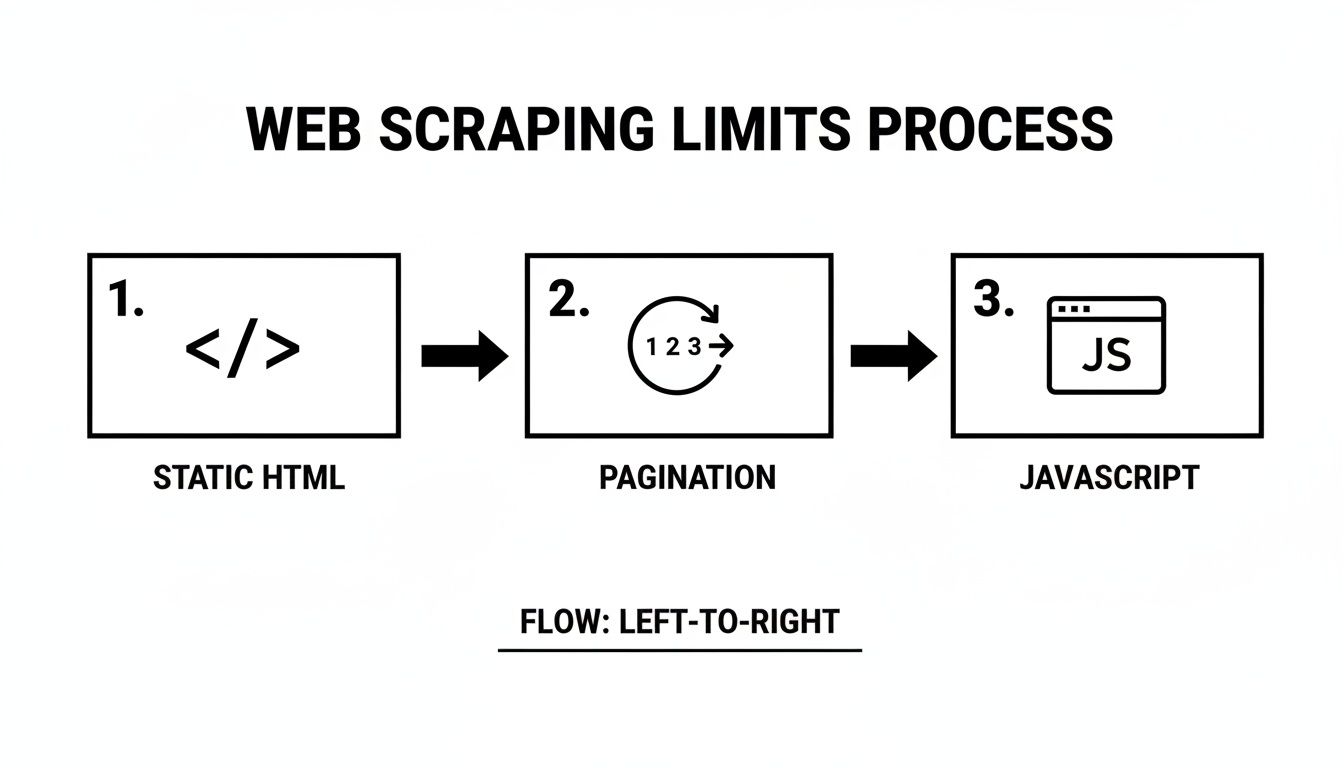

Is the content static HTML?

Yes: requests + BeautifulSoup is a viable starting point.

No (content loads via JS): You must use a headless browser driven by Selenium or Playwright, or a framework like Crawlee. BeautifulSoup alone will fail. For an overview, you can explore the full breakdown of web scraping libraries.

What is your scale?

< 1,000 pages/day: A simple Python script might suffice, but it will be slow.

> 10,000 pages/day: The synchronous nature of requests is a major bottleneck. You must move to an asynchronous framework like Scrapy for performance. A requests-based script is up to 39 times slower than Scrapy at scale. Read the full performance analysis.

What is your budget vs. reliability tolerance?

Low budget, high failure tolerance: You might try datacenter proxies, but expect high block rates and data gaps.

High reliability required: Invest in premium Residential or ISP proxies. The higher cost is offset by dramatically better success rates and cleaner data.
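The rules above can be written down as a small function, which makes them easy to review and adjust. `ScrapeTarget` and `recommend_setup` are hypothetical names, and the branch for 1,000 to 10,000 pages per day is an assumption, since the rules above don't cover that range.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class ScrapeTarget:
    static_html: bool       # False when content is rendered by JavaScript
    pages_per_day: int
    high_reliability: bool  # True when blocks and data gaps are unacceptable


def recommend_setup(target: ScrapeTarget) -> Dict[str, str]:
    """Map the three decision questions to a suggested toolchain."""
    if target.static_html:
        fetching = "requests + BeautifulSoup"
    else:
        fetching = "headless browser (Selenium or Playwright) or Crawlee"

    if target.pages_per_day > 10_000:
        runtime = "asynchronous framework such as Scrapy"
    elif target.pages_per_day < 1_000:
        runtime = "simple synchronous script"
    else:
        # Assumption: this middle range is not covered by the rules above.
        runtime = "start synchronous, measure, migrate if throughput is the bottleneck"

    if target.high_reliability:
        proxies = "residential or ISP proxies"
    else:
        proxies = "datacenter proxies (expect blocks and data gaps)"

    return {"fetching": fetching, "runtime": runtime, "proxies": proxies}


print(recommend_setup(ScrapeTarget(static_html=True, pages_per_day=500,
                                   high_reliability=False)))
```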

Common Buying Mistake: Don't buy a massive proxy pool before you've optimized your scraper's fingerprint. Fix your headers, TLS signature, and rotation logic first. Otherwise, you're just burning through clean IPs with a leaky engine.

Ready to overcome performance bottlenecks and scale your data collection without getting blocked? HypeProxies provides the high-speed, reliable residential and ISP proxy infrastructure your team needs to succeed. Explore our enterprise-grade proxy solutions and start scraping smarter, not harder.
